DigiCortex 1.0 Released - Visualization with Oculus Rift

Click here to download DigiCortex Demo...

DigiCortex Engine v1.0 adds support for neural network visualization through the Oculus Rift, a next-generation virtual reality headset.

With Oculus Rift, visualizations of simulated brain activity are completely immersive, with full support for head-tracking. You can "enter" the simulated brain, zoom in on any part of it, and observe the neural activity. We plan to add many further enhancements to the Oculus Rift visualization mode, including interactive neuron picking and "floating" statistics of neural activity (fMRI, EEG, synaptic conductances).

At the moment, the Oculus Rift development kits are supported, including use of the HMD sensors for 3D scene navigation. Lens distortion correction is applied as a rendering post-processing step.
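To give a rough idea of what a lens distortion correction pass does, here is a minimal Python sketch of a radial "barrel" warp, the kind of per-pixel remapping commonly used for this purpose. It is not DigiCortex's actual shader: the function name `barrel_warp` and the coefficient values are illustrative assumptions, and a real implementation would run on the GPU for every pixel of each eye's image.

```python
def barrel_warp(x, y, k=(1.0, 0.22, 0.24, 0.0)):
    """Radially scale a lens-centered coordinate (x, y).

    The scale is a polynomial in the squared radius. The coefficients in k
    are illustrative placeholders (an assumption), not values taken from
    DigiCortex.
    """
    r_sq = x * x + y * y
    scale = k[0] + k[1] * r_sq + k[2] * r_sq ** 2 + k[3] * r_sq ** 3
    return x * scale, y * scale


# The lens center stays put; points farther from the center move more.
center = barrel_warp(0.0, 0.0)
edge = barrel_warp(1.0, 0.0)
```

With coefficients like these, points near the edge of the view are pushed outward more than points near the center, which pre-compensates for the opposite distortion introduced by the headset lenses.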

How to enable Oculus Rift visualization

Currently, we support Oculus Rift in multi-monitor mode only (there is no switch to full-screen). Adding the -oculus command-line parameter enables Oculus Rift visualization. Oculus Rift support is currently hardcoded, and the headset and sensor are detected automatically. We plan to add more configuration options in the future.

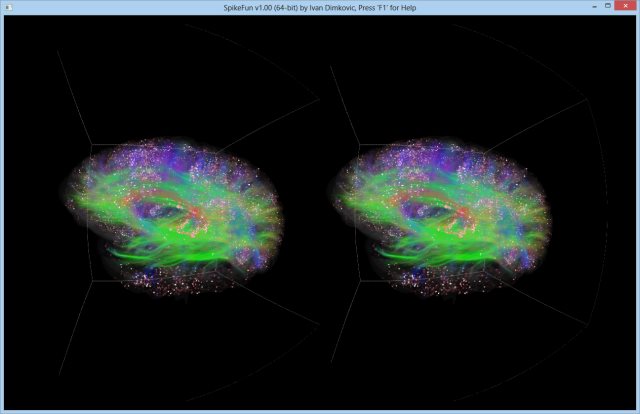

Here is an example of running a small simulation in 64-bit mode with Oculus Rift:

SpikeFun64.exe -project demoSmall.xml -ministhalamus -noresize -oculus -demo

With this command line, the DigiCortex engine renders in Oculus Rift mode (see picture below). By default, to increase rendering quality on the Oculus Rift development kits, the DigiCortex visualization engine supersamples by a factor of two in each dimension (so, for a 1280x800 target resolution, the rendering buffer is 2560x1600). The supersampling factor can be overridden with the -osfac switch (e.g. -osfac 4).
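The supersampling arithmetic above can be sketched in a few lines of Python. The helper name `render_buffer_size` is hypothetical, and the sketch assumes the -osfac value multiplies each dimension of the target resolution, which is consistent with the 1280x800 to 2560x1600 example but is not otherwise confirmed for other factors.

```python
def render_buffer_size(target_width, target_height, factor=2):
    """Return the (width, height) of the supersampled rendering buffer.

    Assumes the factor scales each dimension independently, as in the
    1280x800 -> 2560x1600 example for the default factor of 2.
    """
    if factor < 1:
        raise ValueError("supersampling factor must be at least 1")
    return target_width * factor, target_height * factor


default_size = render_buffer_size(1280, 800)           # default factor of 2
custom_size = render_buffer_size(1280, 800, factor=4)  # as with -osfac 4
```

Under this assumption the pixel count grows with the square of the factor, so a factor of 4 means rendering sixteen times as many pixels as the target resolution.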

End Result

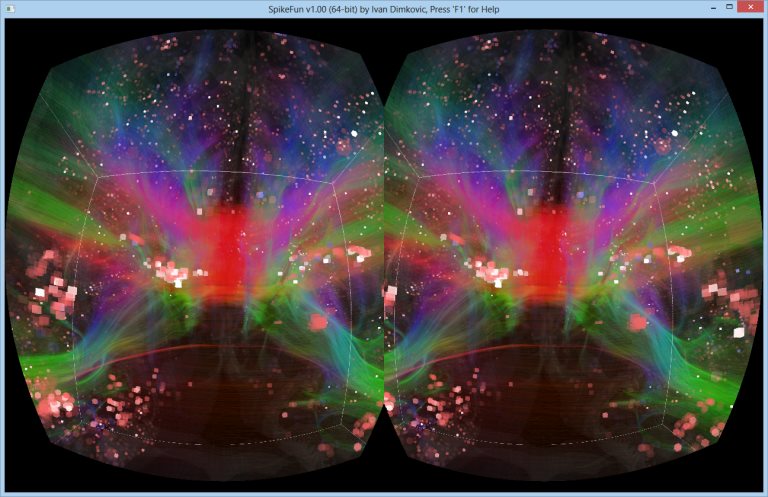

Of course, without an Oculus Rift it is impossible to convey the effect of being "in" the simulation. The picture below shows what is presented to both eyes and how the distortion-correction post-processing filter "looks", but it cannot begin to convey what the view through the Oculus Rift is actually like. So... get your kit now!

Another view, now from "inside" the brain, looking at the corpus callosum tract bundle (shown in red) from a vantage point inside the visual cortex:
